Development, Analysis And Research

by Andrew Johnstone

At Everlution we held a hack day to help motivate developers and get the team working together. We started at 8.30am and finished at 5pm. It was impressive what the four teams, each made up of two developers and a designer, managed to achieve.

It was run as a competition, with all teams competing against each other for a defined number of points per task achieved. The tasks were very ambitious considering we only had a day to complete them.

The key task was to determine whether a meeting room was in use by analysing the webcam's JPEG stream.

The motivation was that many staff members book meeting rooms and don't use them, or randomly hijack meeting rooms.

Task sheet/Points

| # | Task | Points* |

|---|---|---|

| 1 | To build a webpage that tells the user if the boardroom is in use or not by parsing the JPEG stream emitted from the webcam. Scoring:

|

|

| 2 | To use a Face detection and recognition library to say whether one member of your team is in the Boardroom or not. |

|

| 3 | To build an MSN or Skype Bot that will answer a natural language question on whether the Boardroom is in use or not. Must be able to handle at least 3 variations of how question could be asked. Scoring:

|

|

| 4 | To build a native mobile app that will display same information. Scoring:

|

|

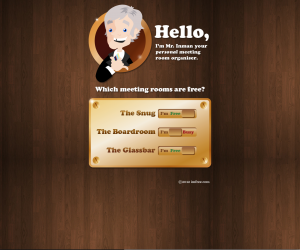

| 5 | To come up with a name, brand/logo and design for the service. Scoring:

|

|

| 6 | Bonus: Good team work |

|

| 7 | Bonus: Quality, reliability and elegance of solution |

|

| 8 | Sabotaging another team’s effort, being disruptive, not participating in spirit of competition, etc. |

|

With regard to the criterion "Detection system to not trigger with someone going in/out of Studio 2": there is a door into the boardroom and, just outside it, a door into another Everlution office. Anybody outside the glass going into that office should therefore not be detected.

The implementations varied drastically between teams, with some based on motion detection.

Team 1 – My Team:

We used a C# client application built on AForge to detect motion in real time, from the remote image and/or a directly attached camera.

The sample client was more than enough: it simply ran a timed event that pushed the stats over HTTP to a PHP-based API, which stored them in a MySQL database. The web page then periodically polled the MySQL database for availability, producing the following data set. A motion_level above 0.02 indicated that the room was occupied. The motion histogram is shown at the bottom of the image stream.

The main issue was that it took me until 11am to install Visual Studio. The implementation was also improved by adjusting the motion detection method depending on whether the stream came from the camera itself or from the image, which was only updated every two seconds.

```json
{
  "boardroom": {
    "data": {
      "date_created": "2012-07-24 00:14:19",
      "name": "boardroom",
      "signal_time": "24/07/2012 00:14:20",
      "fqdn": "HP28458242993",
      "motion_level": "0.000406901",
      "detected_objects_count": "-1",
      "has_motion_been_detected": "",
      "id": "19945",
      "motion_alarm_level": "0.015",
      "flash": "0"
    },
    "last_used": {
      "date_created": "2012-07-24 00:14:19",
      "name": "boardroom",
      "signal_time": "24/07/2012 00:14:20",
      "fqdn": "HP28458242993",
      "motion_level": "0.000406901",
      "detected_objects_count": "-1",
      "has_motion_been_detected": "",
      "id": "19945",
      "motion_alarm_level": "0.015",
      "flash": "0"
    },
    "is_free": true
  }
}
```
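As a minimal sketch of the occupancy check, the Python below reads a payload shaped like the data set above and applies the 0.02 motion_level threshold quoted for Team 1. The API serialises numbers as strings, hence the `float()` conversion. The function name `is_room_free` is hypothetical; the original PHP API already returned `is_free` itself.

```python
import json

# Threshold quoted in the post: a motion_level above 0.02 means "occupied".
OCCUPIED_THRESHOLD = 0.02


def is_room_free(payload: str, room: str = "boardroom",
                 threshold: float = OCCUPIED_THRESHOLD) -> bool:
    """Return True if the latest motion_level for `room` is not above the threshold.

    Numeric fields arrive as JSON strings, so motion_level is converted
    with float() before the comparison.
    """
    document = json.loads(payload)
    reading = document[room]["data"]
    motion_level = float(reading["motion_level"])
    return motion_level <= threshold


sample = '{"boardroom": {"data": {"motion_level": "0.000406901"}, "is_free": true}}'
print(is_room_free(sample))  # True: 0.000406901 is well below 0.02
```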

AForge is an excellent library and provides the following components:

- AForge.Imaging – library with image processing routines and filters

- AForge.Vision – computer vision library

- AForge.Neuro – neural networks computation library

- AForge.Genetic – evolution programming library

- AForge.Fuzzy – fuzzy computations library

- AForge.MachineLearning – machine learning library

- AForge.Robotics – library providing support for some robotics kits

- AForge.Video – set of libraries for video processing
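AForge.Vision's motion detectors report a motion level between 0 and 1, which for a frame-difference detector is roughly the proportion of pixels that changed. The pure-Python sketch below illustrates that idea for two grayscale frames stored as lists of rows. It is not AForge's code, and the per-pixel difference threshold of 15 is an assumption chosen for illustration.

```python
from typing import List

Frame = List[List[int]]  # grayscale frame: rows of 0-255 intensities


def motion_level(previous: Frame, current: Frame, pixel_threshold: int = 15) -> float:
    """Fraction of pixels whose intensity changed by more than pixel_threshold."""
    if len(previous) != len(current) or any(
        len(a) != len(b) for a, b in zip(previous, current)
    ):
        raise ValueError("frames must have the same dimensions")
    total = 0
    changed = 0
    for prev_row, curr_row in zip(previous, current):
        for p, c in zip(prev_row, curr_row):
            total += 1
            if abs(p - c) > pixel_threshold:
                changed += 1
    return changed / total if total else 0.0


empty = [[100] * 10 for _ in range(10)]
someone = [row[:] for row in empty]
for y in range(2, 4):
    for x in range(3, 5):
        someone[y][x] = 200  # a 2x2 patch of the frame changes brightness
print(motion_level(empty, someone))  # 0.04
```

With the 0.02 threshold used by Team 1, a level of 0.04 would count as the room being occupied.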

For face detection I had planned to use OpenCV, but ran out of time to implement it fully, so we used face.com to attempt face recognition instead. Unfortunately, in every team's implementation this API failed to recognise anyone.

Additionally, an MSN bot was built on top, although it did not implement any real natural language processing.
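The task sheet asked for at least three variations of the question. A keyword-based matcher like the hypothetical one below is about as far as a bot without real natural language processing goes; the patterns, replies and the `answer` function are illustrative and not taken from the bot that was built.

```python
import re
from typing import Optional

# Hypothetical phrasings of "is the boardroom in use?" (three variations minimum).
QUESTION_PATTERNS = [
    re.compile(r"\bis\b.*\bboardroom\b.*\b(free|busy|in use|available|taken)\b", re.I),
    re.compile(r"\b(anyone|anybody|someone|somebody)\b.*\b(in|using)\b.*\bboardroom\b", re.I),
    re.compile(r"\bcan i (use|book|have)\b.*\bboardroom\b", re.I),
]


def answer(message: str, room_is_free: bool) -> Optional[str]:
    """Reply if the message looks like an occupancy question, otherwise return None."""
    if not any(pattern.search(message) for pattern in QUESTION_PATTERNS):
        return None
    return "The boardroom is free." if room_is_free else "The boardroom is in use."


print(answer("Is anyone in the boardroom?", room_is_free=False))
```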

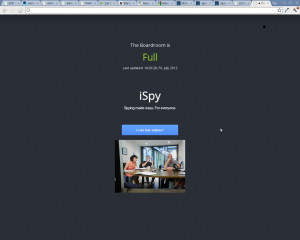

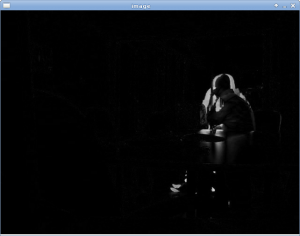

The results in action

Team 2:

Team 2's implementation was based on PHP's Imagick extension, using edge detection: the images were enhanced with edgeImage and combined into composite images.

Two sets of metrics were extracted from each image for statistical analysis. First, the mean and standard deviation of every channel were taken from each image used (the raw image and the composite). Second, a histogram was generated for each channel, from which the standard deviation, variance and skew were calculated.
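The per-channel histogram statistics can be illustrated with a small Python sketch. It assumes a channel is given as a flat list of 0-255 values and uses population moments; Imagick's own functions may compute these slightly differently, and the function names are hypothetical.

```python
from collections import Counter
from math import sqrt
from typing import Dict, List


def channel_histogram(values: List[int]) -> Counter:
    """Count how many pixels take each intensity value in one channel."""
    return Counter(values)


def histogram_stats(histogram: Counter) -> Dict[str, float]:
    """Population mean, variance, standard deviation and skew of a histogram."""
    n = sum(histogram.values())
    if n == 0:
        raise ValueError("empty histogram")
    mean = sum(v * c for v, c in histogram.items()) / n
    variance = sum(c * (v - mean) ** 2 for v, c in histogram.items()) / n
    stddev = sqrt(variance)
    third_moment = sum(c * (v - mean) ** 3 for v, c in histogram.items()) / n
    skew = third_moment / stddev ** 3 if stddev > 0 else 0.0
    return {"mean": mean, "variance": variance, "stddev": stddev, "skew": skew}


print(histogram_stats(channel_histogram([0, 0, 0, 255])))
```

A mostly dark channel with a few bright pixels, as in the example, has a positive skew; computing the moments from the histogram gives the same result as computing them from the raw pixel values.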

Team 2 also planned to use two important metrics that they did not manage to implement. Their intention was to use:

- Edge density (from http://ro.uow.edu.au/cgi/viewcontent.cgi?article=1517&context=infopapers), and

- Image energy, calculating the total amount, standard deviation, etc., all after pre-filtering with the Sobel operator (http://www.win.tue.nl/~wstahw/2IV05/seamcarving.pdf).
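Neither metric was implemented on the day. As a rough sketch, assuming a grayscale image as a list of rows, the code below applies the 3x3 Sobel operator, accumulates |Gx| + |Gy| as the gradient energy (the simple energy function used in the seam carving paper), and takes edge density to be the fraction of interior pixels whose gradient magnitude exceeds a threshold. The threshold of 100 and this exact density definition are assumptions and may differ from the cited edge density paper.

```python
from math import hypot
from typing import List, Tuple

Image = List[List[int]]  # grayscale rows, values 0-255

SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def sobel(image: Image, y: int, x: int) -> Tuple[int, int]:
    """Horizontal and vertical Sobel responses at an interior pixel (y, x)."""
    gx = gy = 0
    for dy in (-1, 0, 1):
        for dx in (-1, 0, 1):
            value = image[y + dy][x + dx]
            gx += SOBEL_X[dy + 1][dx + 1] * value
            gy += SOBEL_Y[dy + 1][dx + 1] * value
    return gx, gy


def edge_density_and_energy(image: Image,
                            magnitude_threshold: float = 100.0) -> Tuple[float, float]:
    """Return (edge density, total energy) over interior pixels; borders are skipped."""
    height = len(image)
    if height < 3 or len(image[0]) < 3:
        return 0.0, 0.0
    width = len(image[0])
    edges = 0
    pixels = 0
    energy = 0.0
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx, gy = sobel(image, y, x)
            energy += abs(gx) + abs(gy)  # gradient energy |dI/dx| + |dI/dy|
            if hypot(gx, gy) > magnitude_threshold:
                edges += 1
            pixels += 1
    return edges / pixels, energy


vertical_edge = [[0, 0, 255, 255, 255] for _ in range(5)]
print(edge_density_and_energy(vertical_edge))
```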

These statistics were then passed to Quinlan's C4.5 decision-tree algorithm, which was given training pictures with and without people in the room.
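C4.5 builds a decision tree by repeatedly splitting on the attribute with the best gain ratio, and handles continuous attributes (such as a channel's mean or skew) with binary threshold splits. The sketch below shows only that core step for a single attribute: it tries midpoints between sorted values, keeps the split with the highest information gain and reports its gain ratio. It is a simplification (no recursion, pruning or missing-value handling), not Quinlan's implementation, and the "empty"/"occupied" labels are hypothetical.

```python
from collections import Counter
from math import log2
from typing import Hashable, Sequence, Tuple


def entropy(labels: Sequence[Hashable]) -> float:
    """Shannon entropy, in bits, of a sequence of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def best_threshold(values: Sequence[float],
                   labels: Sequence[Hashable]) -> Tuple[float, float, float]:
    """Return (threshold, information gain, gain ratio) for the best split value <= t.

    Returns a NaN threshold if no split improves on the unsplit data.
    """
    pairs = sorted(zip(values, labels), key=lambda pair: pair[0])
    n = len(pairs)
    base = entropy([label for _, label in pairs])
    best = (float("nan"), 0.0, 0.0)
    for i in range(1, n):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cannot split between equal values
        left = [label for _, label in pairs[:i]]
        right = [label for _, label in pairs[i:]]
        weight = i / n
        gain = base - weight * entropy(left) - (1 - weight) * entropy(right)
        split_info = -(weight * log2(weight) + (1 - weight) * log2(1 - weight))
        if gain > best[1]:
            threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
            best = (threshold, gain, gain / split_info)
    return best


print(best_threshold([0.1, 0.2, 0.8, 0.9], ["empty", "empty", "occupied", "occupied"]))
```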

The idea behind applying edge density and energy analysis was for the algorithm to learn the room's layout: moving a chair would not change the density or energy much, but adding another one would, so in theory this approach should learn about the objects inside the room. Filtering by edge density to detect features would remove unimportant (low-density) objects such as chairs, or people who are too far away.

Given enough training data containing people and other objects, C4.5 would then learn by itself how to distinguish between a face and a chair.

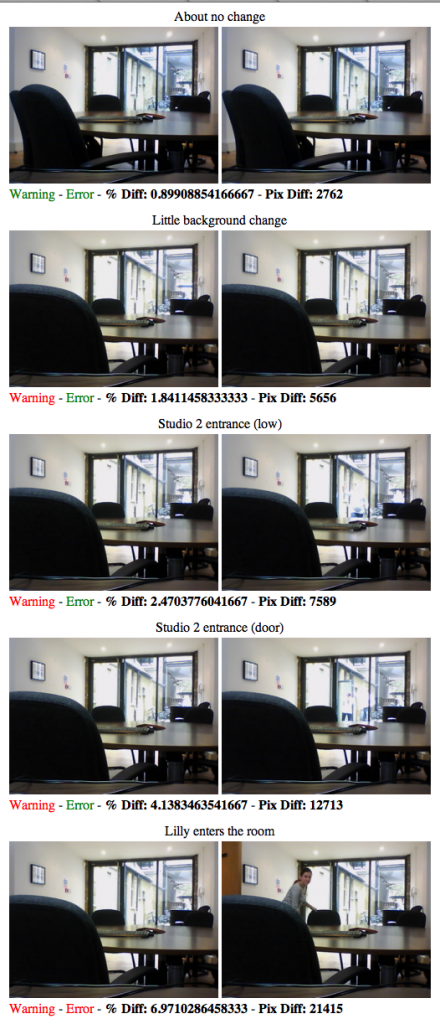

Team 3:

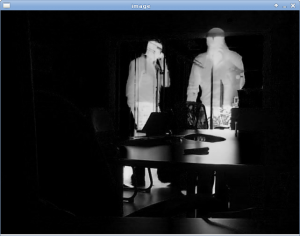

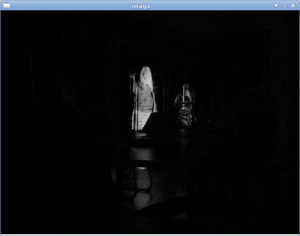

Team 3 wrote their implementation in Python using the OpenCV bindings. They started by loading the image and creating three grayscale images:

- Image 1: The previous boardroom image

- Image 2: The current boardroom image

- Image 3: The AbsDiff between Image 1 and Image 2

Using PIL's ImageChops.difference, the statistics for the difference were stored in SQLite and divided by an average. If the condition was met, the room was marked as occupied.
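Team 3's comparison can be sketched with the standard library alone. The code below computes the mean absolute difference between two grayscale frames (the per-pixel quantity AbsDiff and ImageChops.difference produce), stores each score in SQLite and divides the new score by the average of earlier scores. The post does not say which condition was used, so the ratio threshold of 1.5, the `diffs` table and the helper names are hypothetical.

```python
import sqlite3
from typing import List, Optional

Frame = List[List[int]]  # grayscale rows, values 0-255


def mean_abs_diff(previous: Frame, current: Frame) -> float:
    """Mean per-pixel absolute difference between two same-sized grayscale frames."""
    diffs = [abs(p - c) for prow, crow in zip(previous, current) for p, c in zip(prow, crow)]
    return sum(diffs) / len(diffs) if diffs else 0.0


class DiffHistory:
    """Stores difference scores in SQLite (hypothetical schema)."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS diffs (id INTEGER PRIMARY KEY, score REAL NOT NULL)"
        )

    def record(self, score: float) -> None:
        with self.conn:  # the connection context manager commits the insert
            self.conn.execute("INSERT INTO diffs (score) VALUES (?)", (score,))

    def average(self) -> Optional[float]:
        (avg,) = self.conn.execute("SELECT AVG(score) FROM diffs").fetchone()
        return avg  # None while the table is empty


def is_occupied(history: DiffHistory, score: float, ratio_threshold: float = 1.5) -> bool:
    """Compare the new score with the average of earlier scores, then record it."""
    baseline = history.average()
    history.record(score)
    if not baseline:  # no history yet, or an all-zero baseline
        return False
    return score / baseline > ratio_threshold
```

Dividing by a running average rather than using a fixed cut-off lets the baseline adapt to gradual lighting changes, which is presumably why an average was involved.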

Snapshots from OpenCV can be seen below.


Team 4:

Team 4 used PHP's Imagick extension and the image comparison function at http://robert-lerner.com/imagecompare.php. They ran a series of slices to test whether someone was in the room, and determined that a 5% variance meant the meeting room was occupied.
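The robert-lerner.com script is not reproduced here. As a hypothetical illustration of the slice approach, the sketch below splits a reference (empty-room) frame and the current frame into horizontal slices, compares the mean brightness of each pair, and flags the room as occupied when any slice differs by more than 5% of the full 0-255 range. The slice count of 4 and measuring variance against full scale are assumptions.

```python
from typing import List

Frame = List[List[int]]  # grayscale rows, values 0-255


def slice_means(frame: Frame, slices: int) -> List[float]:
    """Mean brightness of each horizontal band of rows."""
    height = len(frame)
    means = []
    for i in range(slices):
        rows = frame[i * height // slices:(i + 1) * height // slices]
        pixels = [value for row in rows for value in row]
        means.append(sum(pixels) / len(pixels) if pixels else 0.0)
    return means


def occupied_by_slices(reference: Frame, current: Frame, slices: int = 4,
                       tolerance: float = 0.05) -> bool:
    """True if any slice's mean brightness moved by more than `tolerance` of 255."""
    ref_means = slice_means(reference, slices)
    cur_means = slice_means(current, slices)
    return any(abs(r - c) / 255 > tolerance for r, c in zip(ref_means, cur_means))
```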

The results in action

